Updated today: 0 articles

Total articles: 953

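The Road to $1,000 a Day (10): AI Quant Trading: Strengths and Weaknesses of DeepSeek, Grok, Claude, ChatGPT, and Gemini

1. I've noticed that many people, once they have used one AI such as Kimi or Doubao, stick with it and rarely try any other. In fact, each AI has very distinct…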

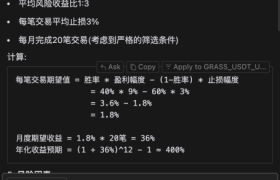

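The Road to $1,000 a Day (8): AI Quant Trading: Can AI Make Money for Us? Asking Claude and DeepSeek to Estimate My Strategy's Annualized…

1. Applying AI to any industry or field is, at its core, about improving efficiency. 2. The phrase most often attached to AI is "cutting costs and boosting efficiency"; in fact, whether it can cut costs really…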

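The Road to $1,000 a Day (5): The Most Powerful Toolkit for Building AI Agents: Claude Sonnet 3.7 + Cursor + MCP

1. Something that only answers questions is just AI. Something that can call tools on its own to solve problems for you is an AI Agent. 2. Claude has just released S…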